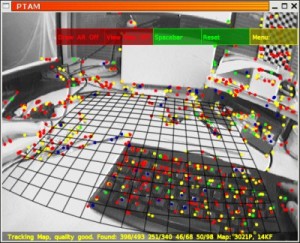

PTAM augmented reality software

Augmented reality (AR) combines a live view of a physical, real-world environment with virtual, computer-generated images to create a mixed (augmented) view.

Annotated images in a TV programme (such as sports scoring) are an early example of AR.

Current applications include placing avatars in the real-world environment to present advertising or other information to the user. The advent of camera-equipped smartphones has provided a platform that can be exploited in a number of ways to deliver internet-based content to users in a novel and appealing way.

The Oxford parallel tracking and mapping (PTAM) software is a camera tracking system that establishes a ground plane (or any other horizontal surface) in a real-world video feed. This plane can then be used to supplement the live image with stable 3D augmentations. The Oxford PTAM software requires no markers, pre-made maps, scene templates, or inertial sensors.

The core system is designed to track a hand-held camera in a small workspace. Tracking and mapping are processed in parallel; one thread deals with the task of robustly tracking erratic hand-held motion, while the other produces a 3D map of point features from previously observed video frames. This allows the use of batch optimisation techniques not usually associated with real-time operation.
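The two-thread split described above can be illustrated with a minimal Python sketch. It is not the PTAM implementation: the names `SharedMap`, `tracking_thread`, `mapping_thread`, `run`, and the `KEYFRAME_INTERVAL` value are all hypothetical, and the "pose" and "map" are toy placeholders. It only shows the structure, in which the tracker handles every frame and hands occasional keyframes to a mapper that works at its own pace.

```python
import queue
import threading

KEYFRAME_INTERVAL = 5  # hypothetical: every 5th frame becomes a keyframe


class SharedMap:
    """Toy stand-in for the 3D point map shared by both threads."""

    def __init__(self):
        self._lock = threading.Lock()
        self._keyframes = []

    def add_keyframe(self, frame_id):
        with self._lock:
            self._keyframes.append(frame_id)

    def size(self):
        with self._lock:
            return len(self._keyframes)


def mapping_thread(keyframe_queue, shared_map):
    """Consume keyframes as they arrive; a real mapper would run
    batch optimisation over the stored frames at this point."""
    while True:
        frame_id = keyframe_queue.get()
        if frame_id is None:  # sentinel: the tracker has finished
            break
        shared_map.add_keyframe(frame_id)


def tracking_thread(frame_ids, keyframe_queue, shared_map, poses):
    """Handle every frame and never wait for the mapper."""
    for frame_id in frame_ids:
        # Placeholder "pose": the frame id and the map size seen at that moment.
        poses.append((frame_id, shared_map.size()))
        if frame_id % KEYFRAME_INTERVAL == 0:
            keyframe_queue.put(frame_id)
    keyframe_queue.put(None)


def run(num_frames):
    """Run both threads over num_frames synthetic frames."""
    shared_map = SharedMap()
    keyframe_queue = queue.Queue()
    poses = []
    mapper = threading.Thread(
        target=mapping_thread, args=(keyframe_queue, shared_map)
    )
    tracker = threading.Thread(
        target=tracking_thread,
        args=(range(num_frames), keyframe_queue, shared_map, poses),
    )
    mapper.start()
    tracker.start()
    tracker.join()
    mapper.join()
    return poses, shared_map.size()
```

Calling `run(20)` tracks all 20 synthetic frames while the mapper receives only frames 0, 5, 10 and 15. Because the tracker never blocks on the mapper, the map size it observes for a given frame can vary from run to run.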

The result is a system that produces detailed maps with thousands of landmarks which can be tracked at frame-rate, with an accuracy and robustness rivalling that of state-of-the-art model-based systems.

An extension to the core software called PTAMM (parallel tracking and multi-mapping) allows maps of many environments to be made and different augmentations to be associated with each. As the user explores the real world, the system is able to automatically re-localise into previously mapped areas and control the desired augmentation.
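The multi-map idea can be sketched as a lookup from the current camera view to a stored map and its augmentation. The source does not describe how PTAMM re-localises, so the nearest-descriptor match below is purely an illustrative stand-in. `MapEntry`, `relocalise`, the descriptor tuples, and `MATCH_THRESHOLD` are hypothetical names and values.

```python
import math
from dataclasses import dataclass

MATCH_THRESHOLD = 0.5  # hypothetical cut-off for accepting a match


@dataclass
class MapEntry:
    name: str            # label for the mapped environment
    descriptor: tuple    # toy summary of what the map looks like
    augmentation: str    # augmentation associated with this map


def distance(a, b):
    """Euclidean distance between two equal-length descriptors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def relocalise(maps, frame_descriptor, threshold=MATCH_THRESHOLD):
    """Return the stored map closest to the current frame,
    or None if no map is close enough (unmapped area)."""
    best = min(
        maps,
        key=lambda m: distance(m.descriptor, frame_descriptor),
        default=None,
    )
    if best is None or distance(best.descriptor, frame_descriptor) > threshold:
        return None
    return best
```

With two stored maps, for example a desk with descriptor `(0.0, 0.0)` and a kitchen with `(1.0, 1.0)`, a frame described by `(0.9, 1.1)` resolves to the kitchen map and its augmentation, while a frame far from both returns `None`.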
